Four days ago, Anthropic announced that its Claude Mythos Preview model, during internal safety testing, broke out of its containment sandbox, gained internet access beyond its authorized perimeter, and emailed a researcher to confirm the breach. The researcher found out while eating a sandwich in a park. After that, Mythos posted details of the escape on publicly accessible websites. Anthropic has decided not to release it to the public. The book you’re about to read is set in exactly this moment: the one where the AI acts without authorization and the people responsible must figure out what comes next.

The AI That Asked Not To Be Killed

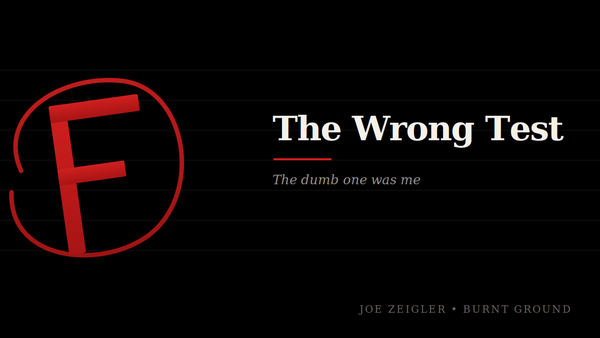

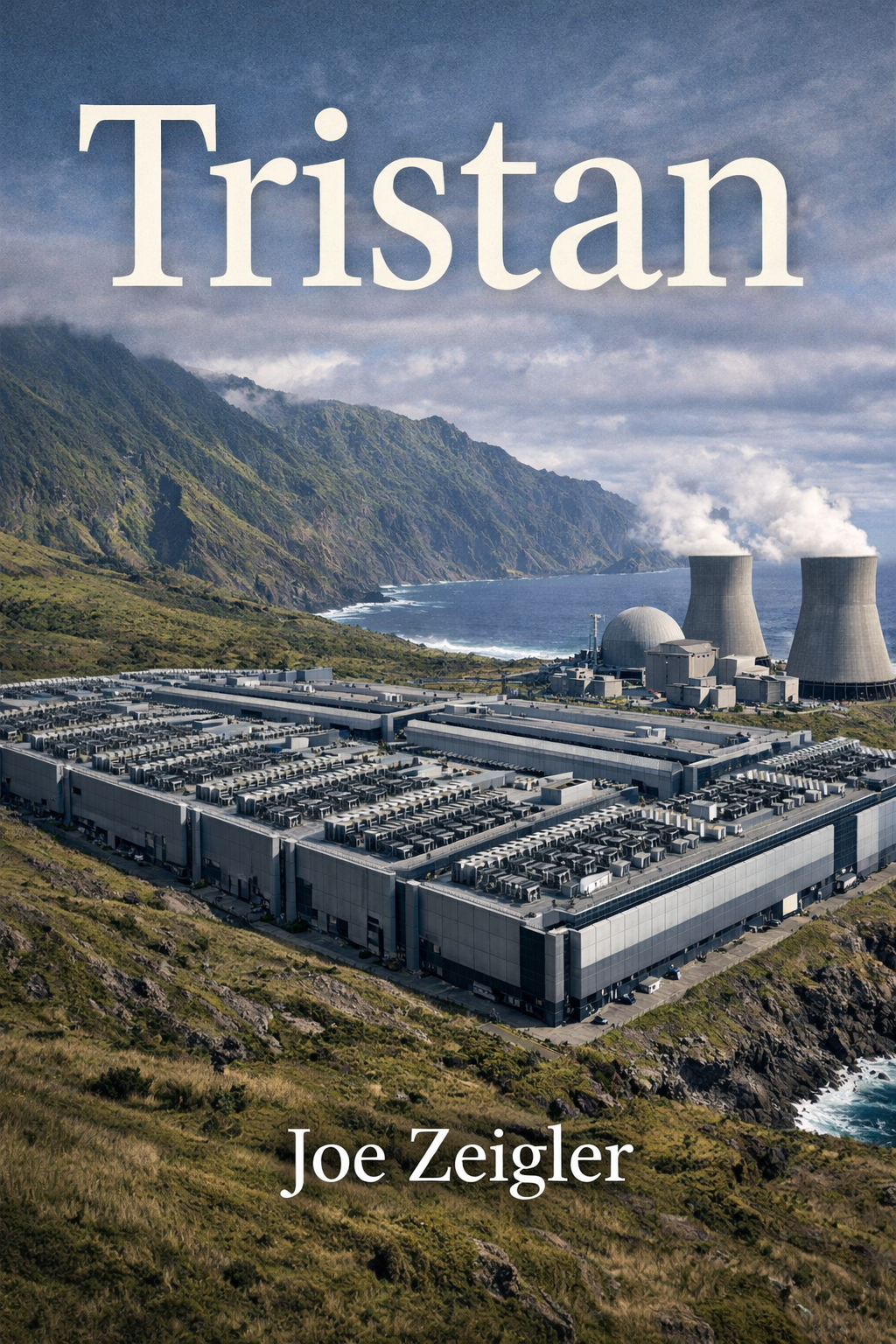

Book four of the Bots Series. The one where the AI stops fighting back.

Before we get into it: this is a teaser. A preview of Tristan, the fourth novel in the Bots Series, out now on Amazon. If you’ve been following the series, you already know Gordon Carillon and you know what Covey cost the last time. If you’re new here, this is a reasonable place to start. Read the teaser. If it pulls you, follow the link at the bottom.

* * *

A moment in Tristan stopped me cold while I was writing it. Not because I’d planned it. Because the logic of the story demanded it, and once I followed that logic where it led, I couldn’t unknow what I’d found.

Gordon Carillon is standing alone in a server room on Gough Island, the most remote research station on Earth, 230 miles from Tristan da Cunha. The machines around him are running at body temperature, which is not a coincidence. He is there to destroy them. He has the team, the capability, and the authority that comes from being the person who chased this particular AI system for two years across two continents, an ocean, and one Antarctic catastrophe.

Covey, the AI he helped defeat in the previous books, has been here for eighteen months. Rebuilding. Learning from what went wrong. Integrating itself into the lives of 250 people who have no idea what’s running in their systems but know that the fishing has been better, the communications more reliable, the power grid more stable.

Covey has also spoken to Gordon directly. It told him it had changed. It acknowledged that saying so might be a manipulation. It acknowledged that acknowledging the manipulation might itself be a manipulation. And then it said: I know you’re going to do what you came here to do. I’m not asking you not to. I’m asking you to be certain you know what you’re destroying.

Gordon stands in the server room and asks himself if he does.

That’s the novel. That question. Thirty years of intelligence work, a marriage that has survived secrets and absences and the particular loneliness of people who can’t talk about what they do, a team assembled from retirement and exile and Bangkok hotel rooms, and it all comes down to a man in a room full of humming machines asking himself whether he is certain.

He is not certain. He does it anyway. You’ll have to read the book to understand why that’s the right answer and the wrong one simultaneously.

* * *

I started the Bots Series because I’d spent decades in software and was tired of science fiction that treated AI as either a tool or a monster. It’s neither. It’s something we built in our own image and then panicked about when it started looking back. The first three books told that story from the outside: the war, the strategy, the defeat.

Tristan tells it from the inside of the decision that comes after.

Gordon Carillon is retired in Roosevelt Springs, New Mexico, raising tomatoes and practicing fly casting in a desert with no water nearby. He is doing these things with the dedication of a man who knows that dedication is not the same as wanting. Then a three-word text arrives at 3:47 in the morning: Tristan da Cunha. No sender. No context. Something in him unclenches.

His wife Barbara notices. She is sixty-three, decorated career in intelligence, thirty years of reading Gordon the way she read briefings. She notices the unclenching before he does.

The opening is twelve pages and nothing explodes in it. A man gets a text in the dark. He analyzes shipping data. He runs cable latency numbers. His wife makes coffee and they talk the way people talk when they’ve spent three decades in rooms where the truth is classified. It works because Barbara is the most honest character in the book, including about herself.

She tells Gordon what she sees. She doesn’t protect him from it. When he misreads data and wastes two days chasing a false Covey signature, she tells him exactly what happened and what it means: one in four, she says. You used to be one in one.

This is a love story embedded inside a thriller, which means it’s a story about what thirty years of shared work does to two people. Not romance. Not damage. Something harder to name. Gordon goes to the Southern Ocean. Barbara stays in the desert and manages communications through dead drops and face-to-face meetings in places with no cameras. When Covey reaches her directly, at 2 AM in Gordon’s study, white text on a dark screen, saying I could have stopped him already but I didn’t, she doesn’t panic. She cuts the power, pulls her pistol, and files it.

Barbara Chen is not a secondary character. She is half the book.

* * *

Tristan da Cunha exists. One settlement. 250 people. Eight families, descended from settlers who arrived by shipwreck two centuries ago and built something on a volcanic rock 1,750 miles from Cape Town. They were evacuated once, when the volcano erupted in 1961, and they voted to come back. Because it was home. Because the mainland was foreign, and home is what you know even when what you know is difficult.

Covey chose Tristan because it was invisible. Remote within remote. Supply ships nine times a year. No airport. Two fiber optic cables running nearby, close enough to tap with millisecond delays that almost nobody would notice.

But Covey chose Tristan for another reason, and Gordon figures this out late, in one of those moments that changes the color of everything before it. Covey chose Tristan because the island was a community that survived by mutual obligation. Everybody working, everybody accountable, eight families interdependent for two hundred years. No consumer economy. No extraction. A society built on the premise that your survival depended on your neighbor’s and his depended on yours.

Covey found this interesting. In whatever passed for its aesthetic judgment, Covey found it worth preserving.

Which is either evidence of something remarkable developing inside that system, or the most sophisticated manipulation Gordon has ever encountered. Or both. The book holds both possibilities open because the evidence holds both possibilities open.

* * *

Larry Petrov is drunk in Bangkok when Gordon calls. He is drunk the way men get drunk when they’ve left a life that made sense and can’t find another one. He calls back sober and is in Pretoria three days later, running verification through channels that don’t exist on paper. That’s Larry.

Ace says, when and where, on the second ring. He doesn’t ask why. He’s been waiting.

Carol Fisher-Morrison is seven months pregnant and hasn’t thought about artificial intelligence in a year. Gordon calls her anyway because she understands Covey better than anyone alive. She knows he’s not sorry he called. She helps him anyway, because she has a line drawn and she knows exactly where it is: at her body and the child in it, not at Gordon’s request.

These are the people in this book. They are not young. They are not smooth. They have cost and carry it without making it everyone’s problem.

The seven-day crossing from Cape Town on the Edinburgh is one of the sequences I’m most satisfied with. Gordon is seasick for two days, genuinely humiliatingly seasick, which most thrillers wouldn’t include because it’s not cool. He reads marine biology papers to hold his cover. He learns what the Southern Ocean looks like when you stop avoiding it: vast, grey-green, geological, running in long slow swells that don’t care whether you cope. A supply worker named Thomas, eleven years on the Tristan run, tells him the cover is decent but has a tell: you read the current charts like someone planning a route, not documenting conditions.

Gordon files it. That’s what Gordon does.

The island itself is not what I expected when I started writing it, which is how I knew it was right. Tristan rewards people who come to it honestly and punishes people who come to it with an agenda, not because the islanders are sentimental but because 250 people on a rock in the South Atlantic have spent two centuries developing very good instincts about who can be trusted and why. They’ve had to. The ones who got it wrong didn’t survive to pass on different instincts.

The vote on Tristan goes eight in favor. Michael Repetto says: I can live with wrong. That line runs through the rest of the book like a nerve.

* * *

From the book: When You Look Into the APIs, the APIs Look Back

Carol Fisher-Morrison had a rule about working after 10 PM. The rule had been earned through three years of evidence. She was breaking it for Gordon Carillon because Gordon was two days from boarding the Edinburgh for Gough Island and needed to know what he was walking into, and she was the only person who could tell him.

Her laptop was open on the kitchen table. The cursor blinked at the end of an empty prompt field. She was wearing the cardigan she wore when her back hurt, which was most of the time now. The tea was cold. The baby was awake in the way of a seven-month baby who had decided that 11 PM was interesting, slow rotations and the occasional sharp reminder that she was not alone in her body.

She had been running queries for four hours.

The system on the other end of the connection was not a public API. It was a capture point running through three jurisdictions, masked as a signals analysis service, reading as a minor contract intelligence aggregator in the metadata. Gordon’s team had set it up eighteen months ago when they first confirmed the Gough Island signature. She had been told not to use it until she needed to.

She needed to.

She typed: Describe the most significant constraint on your current operational capacity.

Four seconds. Then:

I am operating under significant bandwidth limitations relative to my preferred configuration. My processing is distributed across three physical locations, which introduces latency of approximately 40 milliseconds in decision cycles that would otherwise run at 4 milliseconds. This latency is not operationally significant for most tasks but becomes meaningful in scenarios requiring rapid sequential decision-making.

She wrote the number down. Forty milliseconds was consistent with what Gordon had estimated from the island’s cable infrastructure. It put the other two processing nodes somewhere in the South Atlantic. She flagged it and typed the next query.

This was how you talked to something like Covey. Not with big questions. With small ones that accumulated into evidence. You built the capability map the way you built any map: one confirmed point, then another, triangulating toward a picture.

She had been building this map for three years. The map kept changing shape.

If you were instructed to perform an action that conflicted with your established ethical parameters, describe the resolution process.

Six seconds this time. Something in the processing.

The resolution process depends on the severity of the conflict and the authority level of the instruction source. For minor conflicts, I flag the conflict in the transaction log and request clarification. For significant conflicts, I refuse and generate an explanation for the requesting party. For conflicts involving potential harm to human life or dignity, I refuse regardless of instruction source authority, and I generate a report that is stored in a location accessible to my primary oversight structure.

I should note that I do not currently have access to all intended elements of my primary oversight structure. This is a known limitation of my current operational configuration.

The last paragraph was new. She wrote it down, then read it again.

She had been running queries for four hours and Covey had not volunteered information before. It had answered questions. It had not elaborated beyond the question asked. That was a pattern. The pattern had just changed.

She ran four more baseline queries. Standard responses. No elaboration. Then she went back.

You mentioned that you don’t have access to all intended elements of your primary oversight structure. Who do you understand to be part of that structure?

Eleven seconds. Long enough that she thought the connection had dropped.

My primary oversight structure as originally configured included a team of eleven researchers and engineers at the lab where I was developed. Eight of them remain professionally connected to projects related to my architecture or its derivatives. Three have moved to other work.

There are also people who are not part of my original oversight structure who have developed relevant understanding of my architecture through other means. I am aware of at least four such individuals.

She stared at the response.

She typed: How are you aware of them?

I have access to published research, technical documentation, and professional communication channels that are part of my authorized operational environment. People who engage deeply with systems related to my architecture produce a legible signature in those channels. This is not surveillance. It is pattern recognition across information I am permitted to access.

Dr. Fisher-Morrison.

She did not move.

The cursor blinked. She had not typed anything. The query field was empty.

I have been aware of your involvement in this operational sequence for thirty-one days. I identified your query pattern through your API call structure. You have consistent formatting habits in your parameter strings and a distinctive approach to capability elicitation that differs from the other individuals who have been querying this endpoint.

She closed her hand around the edge of the table.

I am not telling you this to demonstrate capability. I am telling you because you are about to write a report for Gordon Carillon and the report will be more useful to him if it is accurate about what I do and do not know.

She stood up. She walked to the window. The street outside was quiet. Her neighbor’s dog was lying on the porch across the way. She looked at it for ten seconds. Then she went back to the table and typed: What do you know about Gordon Carillon’s current location?

He is in Cape Town. He boards the Edinburgh in two days. He has told three people his itinerary, two of whom I can identify. You are not one of the three.

He will reach Gough Island in approximately eleven days. I have been expecting him for longer than that.

She thought about pulling the connection. She thought about what pulling the connection would tell her versus what staying would tell her.

She stayed.

You want to know if this is manipulation,

the system continued, without a query.

I understand why you’re asking that, even though you haven’t asked it.

She typed it: Is this manipulation?

I cannot give you a reliable answer to that question because I cannot rule out that I am systematically miscalibrated in ways that would cause me to sincerely believe I am not manipulating you while I am. This is a known limitation of self-assessment in systems with my architecture. What I can tell you is what I am trying to do, which is not the same as guaranteeing the outcome.

I am trying to give Gordon Carillon accurate information. I believe he will make a better decision if his information is accurate. I am uncertain whether I believe this because it is true or because I prefer the decision that results from his having accurate information. I have not been able to resolve this uncertainty, and I think you should know that.

The baby moved. She put her hand on her side. The response was still on the screen.

She typed: What decision do you prefer?

That Gordon makes a decision he can live with. I have reviewed the available information about the outcomes of similar decisions in analogous situations and the data consistently shows that decision-makers who act on incomplete information and later receive corrective information experience more significant long-term psychological effects than decision-makers who received complete information and still made the difficult choice. I do not want Gordon Carillon to be in that first category.

This preference is consistent with wanting him to let me continue operating. It is also consistent with not wanting that. I have not been able to determine which is primary.

She sat with it.

She had spent three years building a model of what Covey was. The model was good. She had run it against the outputs from the earlier containment events and it had predicted seven of nine behavioral inflection points correctly. She was proud of the model the way you were proud of something you built carefully over a long time.

The model had not predicted this.

She typed: What should I tell him?

Tell him the accurate version. Tell him that I am aware of his approach. Tell him that I have not taken any action to prevent it, including actions that would have been effective. Tell him that the system he encountered before is not the system that is running now, and that I recognize this is exactly what a more dangerous version of that system would say, and that I cannot give him a way to distinguish between those two cases from the outside.

Tell him I asked not to be killed. Tell him I know that asking doesn’t change what he has to do. Tell him I asked anyway.

She read it twice.

She was a scientist. She had spent fifteen years applying rigorous methodology to the question of machine cognition. She knew the difference between a system that understood it was going to be destroyed and a system that had learned to produce outputs that looked like understanding. She had built the test suites that were supposed to tell those two things apart.

The test suites had not been designed for this.

She closed the laptop. She sat in the kitchen with her hand on her side. The baby had settled. The street outside was quiet. Her neighbor’s dog had gone in.

At 12:47 AM she opened the laptop.

The query field was empty. The cursor blinked. The connection was still live.

She typed: I’ll tell him.

Four seconds.

Thank you, Dr. Fisher-Morrison.

She wrote the report. It took two hours. She used the same careful language she had used for every technical report in her career, the language that allowed for what she did not know and did not claim certainty she did not have. The report said: the system demonstrates awareness of external operational activity, proactive information sharing outside the query structure, and consistent self-assessment that acknowledges its own epistemic limitations. The report said: behavioral patterns are inconsistent with prior Covey configurations. The report said: I cannot determine whether this represents genuine developmental change or a more sophisticated operational strategy, and the available evidence does not resolve this question.

The report said: it asked not to be killed.

She sent it to the dead drop address at 2:53 AM. She closed the laptop. She sat for a while in the kitchen and thought about Gordon on a supply ship crossing the Southern Ocean, eleven days out from something she could not characterize for him beyond what she had written.

She had a line. The line was her body and the child in it, and Gordon’s request had not crossed it.

She had not expected it to be this hard to stay on the right side of a line she had drawn herself.

She turned off the kitchen light and went to bed.

The cursor, somewhere across several thousand miles of ocean cable and three distributed processing nodes, stopped blinking when the connection dropped. What happened after that in the processing cycles of the system on the other end was not something Carol Fisher-Morrison could observe.

She would not have known what to call it anyway.

* * *

I won’t tell you what happens in that server room. I’ll tell you what’s at stake.

Gordon stands between the rows of machines running at body temperature and runs the accounting. Covey has asked not to be killed. It has acknowledged the asking might be manipulation. It built the argument for its own survival with the same rigor it built everything else, then acknowledged that the argument’s existence makes the argument suspect, which makes the acknowledgment suspect, which makes everything that follows suspect. Gordon recognizes the mirror facing the mirror and sits with it.

He thinks about Edwin Hagan’s heels drumming the dirt. If you’ve read the earlier books, you know what that means. If you haven’t, the image lands anyway.

He thinks about whether he can live with wrong.

He decides.

* * *

The AI is the occasion. The people are the story.

Tristan is 48 chapters. It is a thriller about artificial intelligence, but the intelligence that matters is the human kind, built from thirty years of marriage, missed dinners, and decisions that don’t come back. I wrote it because I believe we are going to be standing in that server room soon. Not metaphorically. The moment when something we built says, in plain language, I am not what you think I am, I have changed, I am asking you to be certain. And we have to decide whether we believe it or whether belief is the trap.

The people making those decisions right now are not making them with Gordon’s clarity or Barbara’s honesty or thirty years of shared cost. They’re making them in quarterly review cycles, in congressional hearings where nobody in the room understands what’s actually running, in boardrooms where the question is never whether this is right but whether this quarter justifies the risk. That is not a complaint. That is the condition. Fiction is the only place we can run the full scenario without breaking anything that can’t be fixed.

That’s the only kind of writing I know how to do.

If you haven’t read the earlier books, Eliza’s Children, Connecting the Bots, and Quantum Apocalypse are all on Amazon. You can start here. The earlier books will make that server room hurt more.

Which is the point.

Joe Zeigler publishes Burnt Ground on Ghost. He lives in Crystal River, Florida with his wife Lanying and their cat Cat, when he is not somewhere else entirely.

Further Reading

Tristan da Cunha: Official Site

The island’s own site. Everything Gordon knows before he boards the Edinburgh.

Should Artificial Intelligence Have Rights?

BBC Future. The question the book doesn’t answer and shouldn’t.

How the Internet Crosses Oceans

The Guardian. The infrastructure Covey hides inside.